May 5, 2026

ai-outcomes-made-simple-it-starts-with-trusted-organisational-knowledge

Guest Blogger Konstantinos Christodoulakis

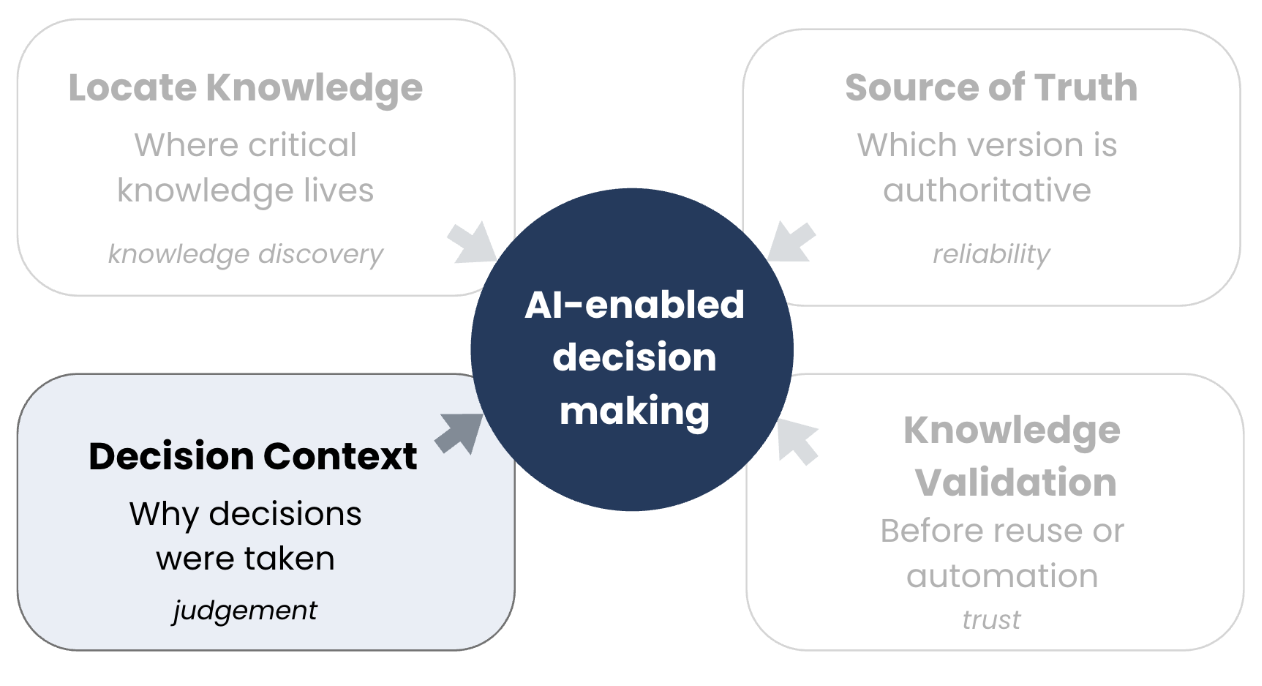

AI Outcomes Made Simple: It Starts with Trusted Organisational Knowledge

April 28, 2026

how-to-manage-the-risks-of-user-generated-content-in-the-enterprise

Guest Blogger Devin Partida

How to Manage the Risks of User-Generated Content in the Enterprise

April 21, 2026

from-content-libraries-to-intelligent-knowledge-systems---leading-the-future-of-km

Top Guest Blogger Ekta Sachania

From Content Libraries to Intelligent Knowledge Systems – Leading the Future of KM

March 24, 2026

knowledge-ambassadors-the-missing-link-in-knowledge-management-programs

With Guest Blogger Najla Alotaibi

Knowledge Ambassadors: The Missing Link in Knowledge Management Programs

March 17, 2026

whats-in-your-km-go-bag-spoiler-its-not-a-chatbot

Brittany Persinger

What’s in Your KM Go Bag? (Spoiler: It’s Not a Chatbot)

March 13, 2026

conversational-leadership-expanding-the-future-of-knowledge-management

KMI Instructor John Hovell

Conversational Leadership: Expanding the Future of Knowledge Management

February 12, 2026

improving-the-front-end-experience-of-your-knowledge-systems

KMI Guest Blogger Devin Partida

Improving the Front-End Experience of Your Knowledge Systems

February 11, 2026

how-to-build-a-knowledge-management-strategy-for-a-new-venture

KMI Guest Blogger Devin Partida

How to Build a Knowledge Management Strategy for a New Venture

January 31, 2026

sparking-the-knowledge-management-engine-with-an-ai-centre-of-excellence

KMI Instructor Rooven Pakkiri

Sparking the Knowledge Management Engine with an AI Centre of Excellence

January 30, 2026

knowledge-management-that-works-for-councils-and-local-government

Guest Blogger Roger Haddon

Knowledge Management That Works for Councils and Local Government

January 13, 2026

overcoming-km-challenges-with-ai-innovations

Ekta Sachania

Overcoming KM Challenges with AI Innovations

December 24, 2025

what-is-ai-driven-knowledge-management-and-how-does-it-change-the-role-of-knowledge-workers

Lucy Manole

What Is AI-Driven Knowledge Management and How Does It Change the Role of Knowledge Workers?

December 18, 2025

when-disruption-hits-home-my-indigo-experience-and-the-km-lessons-we-ignore

Top Guest Blogger Ekta Sachania

When Disruption Hits Home: My IndiGo Experience and the KM Lessons We Ignore

December 9, 2025

2026-the-year-km-gets-re-imagined

Top Guest Blogger Ekta Sachania

2026: The Year KM Gets Re-Imagined

November 14, 2025

the-intersection-of-process-mining-and-knowledge-management

The Intersection of Process Mining and Knowledge Management

November 12, 2025

the-km-leaders-guide-to-fostering-a-culture-of-contribution

Devin Partida

The KM Leader's Guide to Fostering a Culture of Contribution

October 2, 2025

onboarding-offboarding-a-continuous-km-lifecycle

Top KMI Guest Blogger Ekta Sachania

Onboarding & Offboarding: A Continuous KM Lifecycle

September 18, 2025

how-to-write-clear-and-concise-knowledge-base-articles-for-better-customer-support

Lucy Manole

How to Write Clear and Concise Knowledge Base Articles for Better Customer Support

September 17, 2025

the-new-information-governance-integrating-personal-security-into-corporate-km-policy

Devin Partida

The New Information Governance: Integrating Personal Security into Corporate KM Policy

July 30, 2025

how-to-safeguard-critical-knowledge-assets-before-during-and-after-a-crisis

Amanda Winstead

How To Safeguard Critical Knowledge Assets Before, During, and After a Crisis

July 15, 2025

cultural-dimensions-of-knowledge-exchange-building-inclusive-participation-models

Devin Partida

Cultural Dimensions of Knowledge Exchange: Building Inclusive Participation Models

July 14, 2025

the-role-of-knowledge-stewards-in-safeguarding-organizational-intelligence

Devin Partida

The Role of Knowledge Stewards in Safeguarding Organizational Intelligence

July 5, 2025

when-systems-fail-what-a-crisis-teaches-us-about-knowledge-management

Top KMI Blogger Ekta Sachania

When Systems Fail: What a Crisis Teaches Us About Knowledge Management

July 1, 2025

beyond-metrics-the-hidden-roi-of-knowledge-management

Ekta Sachania

Beyond Metrics: The Hidden ROI of Knowledge Management

June 8, 2025

the-au

Rooven Pakkiri

Why is AI and Knowledge Management so Symbiotic?

May 19, 2025

the-role-of-knowledge-management-in-streamlining-compliance-processes

Devin Partida

The Role of Knowledge Management in Streamlining Compliance Processes

May 16, 2025

how-effective-knowledge-management-can-enhance-nonprofit-impact

Devin Partida

How Effective Knowledge Management Can Enhance Nonprofit Impact

May 15, 2025

best-practices-for-financial-knowledge-management-within-organizations

Devin Partida

Best Practices for Financial Knowledge Management Within Organizations

May 3, 2025

innovation-doesnt-just-happen-in-strategy-rooms----its-born-in-the-bylanes-of-experience

Ekta Sachania

Innovation Doesn’t Just Happen in Strategy Rooms — it’s Born in the Bylanes of Experience

April 25, 2025

km-content-lifecycle-continuous-improvement-framework

Ekta Sachania

KM Content Lifecycle: Continuous Improvement Framework

April 16, 2025

best-practices-for-documenting-and-managing-employee-knowledge-in-hr

Devin Partida

Best Practices for Documenting and Managing Employee Knowledge in HR

April 15, 2025

the-biggest-challenge-of-knowledge-management-km

Dr. Mustafa Hafizoglu; Length: ~400 words, 2 min read.

The Biggest Challenge of Knowledge Management (KM)

April 7, 2025

why-change-management-needs-knowledge-management-a-strategic-partnership-for-sustainable-transformation

Ekta Sachania

Why Change Management Needs Knowledge Management: A Strategic Partnership for Sustainable Transformation

March 12, 2025

from-chaos-to-clarity-how-knowledge-management-powers-winning-proposals-in-presales

From Chaos to Clarity: How Knowledge Management Powers Winning Proposals in Presales

March 3, 2025

knowledge-management-in-the-age-of-ai-challenges-and-opportunities

Harikrishna Kundariya

Knowledge Management in the Age of AI: Challenges and Opportunities

March 3, 2025

escaping-the-definition-trap-leveraging-knowledge-for-clarity-and-success

Dr. Mustafa Hafizoglu

Escaping the Definition Trap: Leveraging Knowledge for Clarity and Success

February 13, 2025

from-data-to-wisdom-using-ai-to-strengthen-knowledge-management-strategies

Guest Blogger Amanda Winstead

From Data to Wisdom: Using AI to Strengthen Knowledge Management Strategies

January 13, 2025

bridging-the-gap-how-knowledge-managers-embody-the-spirit-of-trailblazers

KMI Top Blogger Ekta Sachania

In today’s fast-evolving and highly competitive global business landscape, organizations need leaders who can navigate change, drive innovation, and foster growth. Trailblazers are those rare individuals who create transformative pathways for others to follow. While the concept of a trailblazer often conjures up images of visionaries or entrepreneurs, it also aligns closely with the role of a Knowledge Manager. A Knowledge Manager (KM) is not just a custodian of information but a strategic driver of change, efficiency, and innovation.

Bridging the Gap: How Knowledge Managers Embody the Spirit of Trailblazers

April 26, 2019

video-km-showcase-2019-recap

Admin

Video: KM Showcase 2019 Recap

January 2, 2018

kmi-interviews-with-recent-ckm-students

Admin

KMI Interviews with Recent CKM Students

April 3, 2018

what-is-knowledge-management-and-why-is-it-important

Zach Wahl | President and CEO, Enterprise Knowledge

What is Knowledge Management and Why Is It Important?

May 16, 2018

can-km-be-your-superpower

Vanessa DiMauro | Leader Networks

Can KM Be Your Superpower?

June 11, 2018

tips-from-a-veteran-knowledge-management-practitioner

Admin

Tips from a Veteran Knowledge Management Practitioner

August 13, 2018

what-we-learned-from-running-a-km-world-cup

Ilana Botha | Moorhouse Consulting

What We Learned from Running a KM "World Cup"

November 14, 2018

case-study-revitalizing-a-law-firm-intranet

Oz Benamram | CKO, White and Case LLP

Case Study: Revitalizing a Law Firm Intranet

March 28, 2019

video-collective-intelligence

Stephanie Barnes

Video: Collective Intelligence

March 12, 2020

reflections-on-km-showcase-2020

Liz Herman, PhD PMP CPTC | Senture, LLC

Reflections on KM Showcase 2020

April 14, 2020

keynote-presentation-from-km-showcase-km2020-and-beyond

Admin

Keynote Presentation from KM Showcase: "KM2020 and Beyond"

July 29, 2020

how-knowledge-management-helps-make-remote-work-work

CKM Grad Enene Ejembi

How Knowledge Management Helps Make Remote Work, Work

August 14, 2020

four-key-reasons-why-organizations-should-manage-their-knowledge

KMI Guest Blogger Dr. Randhir Pushpa

Four Key Reasons Why Organizations Should Manage Their Knowledge

January 7, 2021

unlocking-tacit-knowledge-in-knowledge-management

KMI Guest Blogger Ekta Sachania

Unlocking Tacit Knowledge in Knowledge Management

March 25, 2021

how-is-knowledge-management-revolutionizing-the-health-care-industry

KMI Guest Blogger Ekta Sachania

How is Knowledge Management Revolutionizing the Health Care Industry

February 10, 2021

the-importance-of-knowledge-management-in-marketing

KMI Guest Blogger Marie Barnes

The Importance of Knowledge Management in Marketing

March 8, 2021

the-goal-of-knowledge-management

KMI Guest Blogger Emily Harrinson

The Goal of Knowledge Management

April 15, 2021

5-effective-books-on-knowledge-management

KMI Guest Blogger Lauren Groff

5 Effective Books On Knowledge Management

April 28, 2021

knowledge-management-and-seo-5-things-to-know

KMI Guest Blogger Nancy Howard

Knowledge Management and SEO: 5 Things To Know

April 29, 2021

writing-knowledge-management-rules-6-essential-steps

KMI Guest Blogger Jessica Fender

Writing Knowledge Management Rules: 6 Essential Steps

May 10, 2021

quick-guide-on-5-knowledge-management-examples

KMI Guest Blogger Regina Wheeler

Quick Guide On 5 Knowledge Management Examples

May 13, 2021

what-s-changed-in-knowledge-management-since-2020-the-era-of-covid

KMI Guest Blogger Estella Friesen

What’s Changed in Knowledge Management Since 2020: The Era of Covid

May 20, 2021

career-opportunities-in-the-field-of-knowledge-management

KMI Guest Blogger Elizabeth Hines

Career Opportunities in the Field of Knowledge Management

June 22, 2021

the-potential-for-knowledge-management-in-smart-cities

KMI Guest Blogger Alicia Thompson

The Potential for Knowledge Management in Smart Cities

June 9, 2021

student-internship-in-knowledge-management-in-2021

KMI Guest Blogger Emily Harrinson

Student Internship In Knowledge Management In 2021

June 16, 2021

6-best-practices-of-knowledge-management-for-2021

KMI Guest Blogger Prabhjot Singh

6 Best Practices of Knowledge Management for 2021

September 28, 2021

successes-and-failures-of-knowledge-management

KMI Guest Blogger Wanda Lafond

Successes and Failures of Knowledge Management

October 8, 2021

the-practicality-of-knowledge-management-in-health-today

KMI Guest Blogger Amanda Winstead

The Practicality of Knowledge Management in Health Today

October 26, 2021

a-complete-guide-on-knowledge-management-crm-as-a-support-for-businesses-in-2021

KMI Guest Blogger Dur-e-Sabih

A Complete Guide on Knowledge Management & CRM as a Support for Businesses In 2021

December 15, 2021

a-superhero-s-guide-to-knowledge-management

KMI Guest Blogger David Newstead, CKM, PMP

A Superhero’s Guide to Knowledge Management

January 11, 2022

forming-and-leading-a-grassroots-knowledge-management-movement

CKM Grad and Master CKM Candidate Tim Billbrough

Forming and Leading a Grassroots Knowledge Management Movement

February 26, 2022

perfecting-competency-based-km

KMI Guest Blogger Michael Sequeira

Perfecting Competency-Based KM

February 1, 2022

in-conversation-with-km

KMI Guest Blogger Michael Sequeira

In Conversation with KM

March 25, 2022

learn-to-ace-it-with-knowledge-management

KMI Guest Blogger Michael Sequeira

Learn to ACE-IT with Knowledge Management

March 31, 2022

explaining-knowledge-management-it-s-importance-use-cases-and-types

KMI Guest Blogger Sasi Dharan

Explaining Knowledge Management; Its Importance, Use Cases and Types

April 6, 2022

shift-toward-the-abcde-of-knowledge-management

KMI Guest Blogger Michael Sequeira

Shift Toward the 'ABCDE' of Knowledge Management

March 25, 2022

role-of-a-knowledge-management-system-in-the-healthcare-industry

KMI Guest Blogger Sowmya Juttukonda

Role of a Knowledge Management System in the Healthcare Industry

July 13, 2022

knowledge-management-for-risk-reduction

KMI Guest Blogger Amanda Winstead

Knowledge Management For Risk Reduction

July 18, 2022

tips-to-implement-a-knowledge-management-system-within-a-budget

KMI Guest Blogger Mary Baker

Tips To Implement A Knowledge Management System Within A Budget

August 11, 2022

the-relevance-of-knowledge-management-to-organizations

KMI Guest Blogger Eric Harris

The Relevance of Knowledge Management to Organizations

November 24, 2022

how-effective-knowledge-management-affects-your-company-s-profits

KMI Guest Blogger Kristen Bray

How Effective Knowledge Management Affects Your Company’s Profits

January 22, 2023

how-km-is-driven-through-business-storytelling

KMI Guest Blogger Michael Sequeira

How KM is Driven Through Business Storytelling

January 28, 2023

the-elevator-pitch-and-km-what-s-the-connection

KMI Guest Blogger Michael Sequeira

The Elevator Pitch and KM - What's the Connection?

January 19, 2023

km-training-super-workforce

KMI Guest Blogger Anand Subramaniam

KM + Training = Super-Workforce

February 26, 2023

knowledge-management-and-resilience

KMI Guest Blogger Stephanie Barnes

Knowledge Management and Resilience

March 21, 2023

the-different-roles-in-a-knowledge-management-team

KMI Guest Blogger Gary Wyatt

The Different Roles in a Knowledge Management Team

April 23, 2023

how-the-decay-of-institutional-knowledge-affects-the-growth-of-an-organization

KMI Guest Blogger Ekta Sachania

How the Decay of Institutional Knowledge Affects the Growth of an Organization

June 1, 2023

you-are-a-knowledge-manager-well-what-does-that-even-mean

KMI Guest Blogger Ekta Sachania

You are a Knowledge Manager? Well, What Does That Even Mean?

August 4, 2023

tacit-knowledge-why-and-how-to-capture-it

KMI Guest Blogger Ekta Sachania

Tacit Knowledge: Why and How to Capture It

August 7, 2023

identifying-kpis-for-your-knowledge-management-program

KMI Guest Blogger Ekta Sachania

Identifying KPIs for your Knowledge Management Program

September 12, 2023

the-knowledge-management-services-menu-card

KMI Guest Blogger Ekta Sachania

The Knowledge Management Services Menu Card

November 1, 2023

leveraging-knowledge-management-to-detect-and-address-employee-burnout

KMI Guest Blogger Amanda Winstead

Leveraging Knowledge Management to Detect and Address Employee Burnout

December 20, 2023

integrating-knowledge-management-in-pre-employment-screening

KMI Guest Blogger Amanda Winstead

Integrating Knowledge Management in Pre-Employment Screening

February 19, 2024

creating-knowledge-through-storytelling

KMI Guest Blogger Ekta Sachania

Creating Knowledge Through Storytelling

February 17, 2024

what-s-on-the-knowledge-management-menu-the-km-services-menu-card

KMI Guest Blogger Ekta Sachania

What's on the Knowledge Management Menu? The KM Services Menu Card...

March 7, 2024

how-knowledge-management-can-set-up-organizations-for-sustainable-success

KMI Guest Blogger Ekta Sachania

How Knowledge Management Can Set up Organizations for Sustainable Success

March 17, 2024

optimizing-organization-functions-with-knowledge-management-part-1

KMI Guest Blogger Ekta Sachania

Optimizing Organization Functions with Knowledge Management (Part 1)

August 28, 2024

the-importance-of-knowledge-management-in-heavy-industries

KMI Guest Blogger Amanda Winstead

The Importance of Knowledge Management in Heavy Industries

October 28, 2024

harnessing-the-power-of-knowledge-management-in-consulting-firms

KMI Top Blogger, Ekta Sachania

Harnessing the Power of Knowledge Management in Consulting Firms

October 9, 2024

maximizing-project-efficiency-through-knowledge-management-and-targeted-training

KMI Guest Blogger Amanda Winstead

Maximizing Project Efficiency Through Knowledge Management and Targeted Training
